LCLS

ML + LCLS

The LINAC Coherent Light Source (LCLS) is the world's first hard x-ray laser. A $1bn instrument, LCLS produces light that is bright enough (10¹² photons), short enough (~20 fs pulses), and of the right wavelength (~1 Å) to image chemistry, biomolecules, plasmas, and materials. Check out the LCLS YouTube feed for some highlights of the science done at LCLS.

Typical experiments at LCLS are conducted over the course of 5 days by outside user groups. Users arrive and work together in intense 12-hour shifts to make their one-off experiments work. Two or more new experiments every week are typical. During a single experiment, at a rate of 120 shots per second, on the order of 100 TB of raw data will be collected in the form of images, vectors, and scalars. Making sense of all that data is a major challenge. The SLAC ML initiative aims to deploy the latest ML technology to help maximize the science potential of LCLS.

Compounding this challenge, SLAC is nearing completion of a $1bn+ upgrade to LCLS, known as LCLS-II. Over the course of the next few years, LCLS-II will ramp up operation to an ultimate goal of 1 million shots per second. Each second's worth of experimentation would produce up to a TB of data, streaming continuously: over a petabyte per hour. It is unreasonable to save such raw data to disk, so SLAC is developing hardware-accelerated streaming data analysis methods to deal with this new paradigm. The goal is to reduce the data to a minimal representation of manageable size that preserves the information contained in the data while minimizing extraneous noise and systematic and known structures. Doing so in real time, and adapting to changing experimental environments, requires a different modality than common machine learning. One must develop hardware-aware algorithms that adapt to dynamic changes in the source and in user needs, yet still allow for so-called "batch size = 1" inference at a throughput of 1M frames per second, with model re-training or adaptation on a timescale of tens of minutes.
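To put those figures in perspective, here is a back-of-the-envelope calculation in Python. It uses only the round numbers quoted above and assumes decimal units (1 TB = 10¹² bytes); the variable names are illustrative.

```python
# Back-of-the-envelope LCLS-II data rates from the round figures in the text.
SHOTS_PER_SECOND = 1_000_000   # ultimate LCLS-II repetition rate
BYTES_PER_SECOND = 1e12        # "up to a TB" per second (decimal terabyte assumed)

bytes_per_shot = BYTES_PER_SECOND / SHOTS_PER_SECOND
petabytes_per_hour = BYTES_PER_SECOND * 3600 / 1e15
seconds_between_shots = 1 / SHOTS_PER_SECOND

print(f"average data per shot: {bytes_per_shot / 1e6:.1f} MB")
print(f"raw volume:            {petabytes_per_hour:.1f} PB per hour")
print(f"time between shots:    {seconds_between_shots * 1e6:.1f} microseconds")
```

At these rates a new frame of roughly a megabyte arrives every microsecond, which is why sustained throughput at batch size 1 matters more here than offline training speed.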

To answer these challenges, the ML initiative is pursuing a number of ongoing research and development efforts:

Dealing with a Data Firehose: Data Reduction

At LCLS-II data rates, the transfer of raw data is unreasonable if not impossible. The development of ultra-low-latency, high-throughput Edge Machine Learning (EdgeML) for use in the LCLS-II Data Reduction Pipeline (DRP) is focused on deploying ML models to all manner of EdgeML accelerators: FPGAs, NVIDIA GPUs, Graphcore IPUs, Coral Edge TPUs, SambaNova RDUs, and GroqChips.

The principle is to distill the raw bits into discovery or actionable information inside, or directly attached to, the detector electronics. These models will analyze the continuous stream of data, passing through ("leaking") raw data for validation and anomaly inspection at a rate of 0.1%, discarding useless or known results, and mapping the remaining 99.9% of the data feed to a reduced but information-rich representation for downstream processes.
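The control flow of such a reduction stage can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `reduce_frame` is a hypothetical stand-in for a trained EdgeML model, the random-sampling leak is one possible way to realize the 0.1% rate, and the "discard useless or known results" step is omitted for brevity.

```python
import random

LEAK_FRACTION = 0.001  # the 0.1% raw-data leak rate quoted in the text

def reduce_frame(frame):
    """Hypothetical stand-in for an EdgeML model: keep two summary features."""
    peak_index = max(range(len(frame)), key=lambda i: frame[i])
    return {"total": sum(frame), "peak_index": peak_index}

def reduce_stream(frames, leak_fraction=LEAK_FRACTION, seed=0):
    """Yield ("raw", frame) for a small random sample, ("reduced", features) otherwise."""
    rng = random.Random(seed)
    for frame in frames:
        if rng.random() < leak_fraction:
            yield "raw", frame            # leaked unmodified for validation
        else:
            yield "reduced", reduce_frame(frame)

# Usage on simulated frames (random integers standing in for detector readout).
sim = random.Random(1)
frames = ([sim.randint(0, 9) for _ in range(16)] for _ in range(10_000))
kinds = [kind for kind, _ in reduce_stream(frames)]
print(kinds.count("raw"), "raw frames leaked out of", len(kinds))
```

Because the stage is written as a generator, frames are processed one at a time as they arrive, mirroring the streaming, batch-size-1 setting rather than a batch job over stored data.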

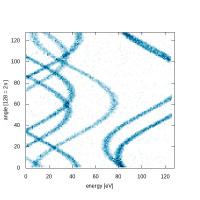

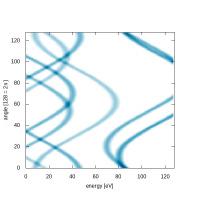

We are using two internally driven R&D projects as exemplars of the EdgeML paradigm: a multi-spectral imaging approach used in sub-fs spectrogram encoding of x-ray arrival time, and an angle-resolving electron spectrometer used both in attosecond x-ray pulse shape recovery and in molecular-frame-resolved photoelectron and Auger-Meitner spectroscopies. Both use cases target data ingestion rates in the range of 50 GB/s to 1 TB/s.

Anomaly Detection: Finding the Interesting Needle in the Haystack

In the high data velocity environment of LCLS-II, it's only possible to save a small set of data. Data are chosen based on how we expect the data to look -- but what if those assumptions are wrong?

The most interesting scientific results are often completely unexpected -- we can't just look for what we expect from an experiment. Therefore, the ML group, in partnership with LCLS and outside collaborators, is developing various anomaly detection systems that will run online. Anomalous data will be saved to disk during running experiments for immediate inspection. Whether the anomalies capture interesting science or something that went wrong, experimenters at LCLS will be able to take immediate action.
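As a minimal illustration of what "online" means here, the sketch below flags values that sit far from a running mean, using Welford's algorithm so that no past data needs to be stored. It is a simple statistical baseline operating on a single scalar feature per shot, not the detection systems under development; the class name, threshold, and warm-up length are assumptions made for the example.

```python
import math

class RunningAnomalyDetector:
    """Flag scalar features far from the running mean (Welford's algorithm)."""

    def __init__(self, threshold_sigma=5.0, warmup=30):
        self.threshold_sigma = threshold_sigma
        self.warmup = warmup   # shots to observe before flagging anything
        self.count = 0
        self.mean = 0.0
        self.m2 = 0.0          # running sum of squared deviations from the mean

    def update(self, value):
        """Return True if `value` looks anomalous, then fold it into the statistics."""
        anomalous = False
        if self.count >= self.warmup:
            std = math.sqrt(self.m2 / (self.count - 1))
            anomalous = std > 0 and abs(value - self.mean) > self.threshold_sigma * std
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (value - self.mean)
        return anomalous

# Usage: a steady signal followed by one outlier shot.
detector = RunningAnomalyDetector()
steady = [detector.update(10 + (0.1 if i % 2 else -0.1)) for i in range(100)]
outlier_flagged = detector.update(50.0)
print(any(steady), outlier_flagged)
```

Each update costs constant time and memory, which is the property an online detector needs; a production system would also have to decide whether flagged shots should be excluded from the running statistics.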

Automated Crystallography: Learning from Past Data

Protein crystallography reveals the atomic scale of biology. At LCLS, we study not only how proteins -- biology's nano-scale machines -- are built, but also how they move. To do this, researchers obtain diffraction images with many peaks of various shapes and sizes.

Led by Chuck Yoon at LCLS, researchers are training neural networks on previously measured protein diffraction images so new ones can be automatically recognized and analyzed.

Photon Finding: Is that One Photon or Two?

Atom-scale dynamics in condensed matter underlie many phenomena of interest: superconductivity, phase transitions, defect formation. Powerful techniques for measuring these dynamics across many decades of time (from fs to seconds) are therefore in great demand. One such technique is x-ray photon correlation spectroscopy (XPCS). To perform XPCS measurements at the fastest timescales and on the smallest samples, we need to count every photon scattered by the sample. SLAC researchers are currently using ML methods to extract every photon from x-ray diffraction images to push the boundaries of what is possible in XPCS and other photon-hungry experiments.
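For context, the simplest non-ML way to turn detector signal into photon counts is to divide each pixel's signal by the signal expected from one photon and round. The sketch below shows that baseline only; it is not the ML method referred to above, and `adu_per_photon` and `pedestal` are hypothetical calibration values chosen for the example.

```python
def count_photons(adu_image, adu_per_photon, pedestal=0.0):
    """Estimate per-pixel photon counts by rounding pedestal-subtracted signal."""
    return [
        [max(0, round((adu - pedestal) / adu_per_photon)) for adu in row]
        for row in adu_image
    ]

# Usage: a tiny simulated image in detector units (ADU), 100 ADU per photon assumed.
image = [
    [2, 98, 205],
    [-3, 151, 0],
]
counts = count_photons(image, adu_per_photon=100)
print(counts)
```

A pixel reading 151 ADU shows where this approach runs out of road: it could be two photons with a low reading, or one photon plus charge shared from a neighboring pixel. Rounding cannot tell these cases apart, which is the kind of ambiguity that motivates learned approaches.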

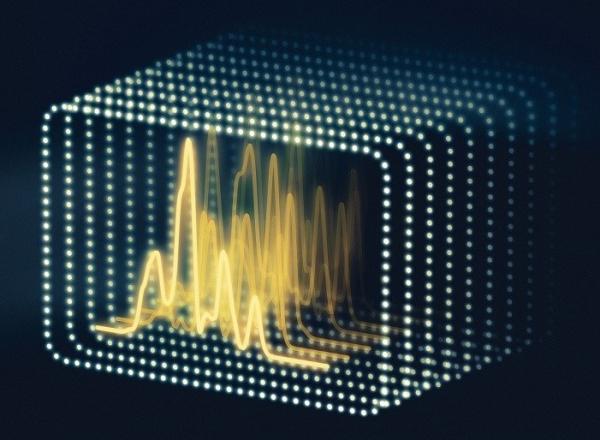

XFEL Ghost Imaging

In conventional imaging, light falling on an object produces a two-dimensional image on a detector, whether that is the back of your eye, the megapixel sensor in your cell phone, or an advanced x-ray detector. Ghost imaging, on the other hand, constructs an image by analyzing how random patterns of light shining onto the object affect the total amount of light coming off the object. Using ghost imaging, we leverage the idea that measurement is easier than control to reach sub-femtosecond pump-probe times, ultrahigh spectral resolutions, or simpler data-taking modes.
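The core idea can be shown with a small simulation. In the sketch below, each shot illuminates a one-dimensional object with a random but measured pattern, and a single "bucket" detector records only the total transmitted light. Correlating the bucket fluctuations with the pattern fluctuations recovers the object. This is the textbook correlation estimator under idealized, noise-free assumptions, with a made-up test object; it is not a description of the analysis used at LCLS.

```python
import random

def ghost_image(patterns, bucket):
    """Correlate bucket-signal fluctuations with pattern fluctuations, pixel by pixel."""
    n_shots = len(patterns)
    n_pixels = len(patterns[0])
    mean_bucket = sum(bucket) / n_shots
    mean_pattern = [sum(p[i] for p in patterns) / n_shots for i in range(n_pixels)]
    return [
        sum((b - mean_bucket) * (p[i] - mean_pattern[i])
            for p, b in zip(patterns, bucket)) / n_shots
        for i in range(n_pixels)
    ]

# Simulated example: a hypothetical 1-D object with two transmitting pixels.
rng = random.Random(0)
obj = [0, 0, 1, 0, 0, 1, 0, 0]
patterns = [[rng.random() for _ in obj] for _ in range(5000)]    # measured, not controlled
bucket = [sum(t * x for t, x in zip(obj, p)) for p in patterns]  # total light per shot
image = ghost_image(patterns, bucket)
print([round(v, 3) for v in image])
```

The reconstructed values are large only at the two transmitting pixels and close to zero elsewhere. Note that the patterns are never chosen, only recorded, which is the sense in which measurement replaces control.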